AI Killed Stock Photos. Then It Became One.

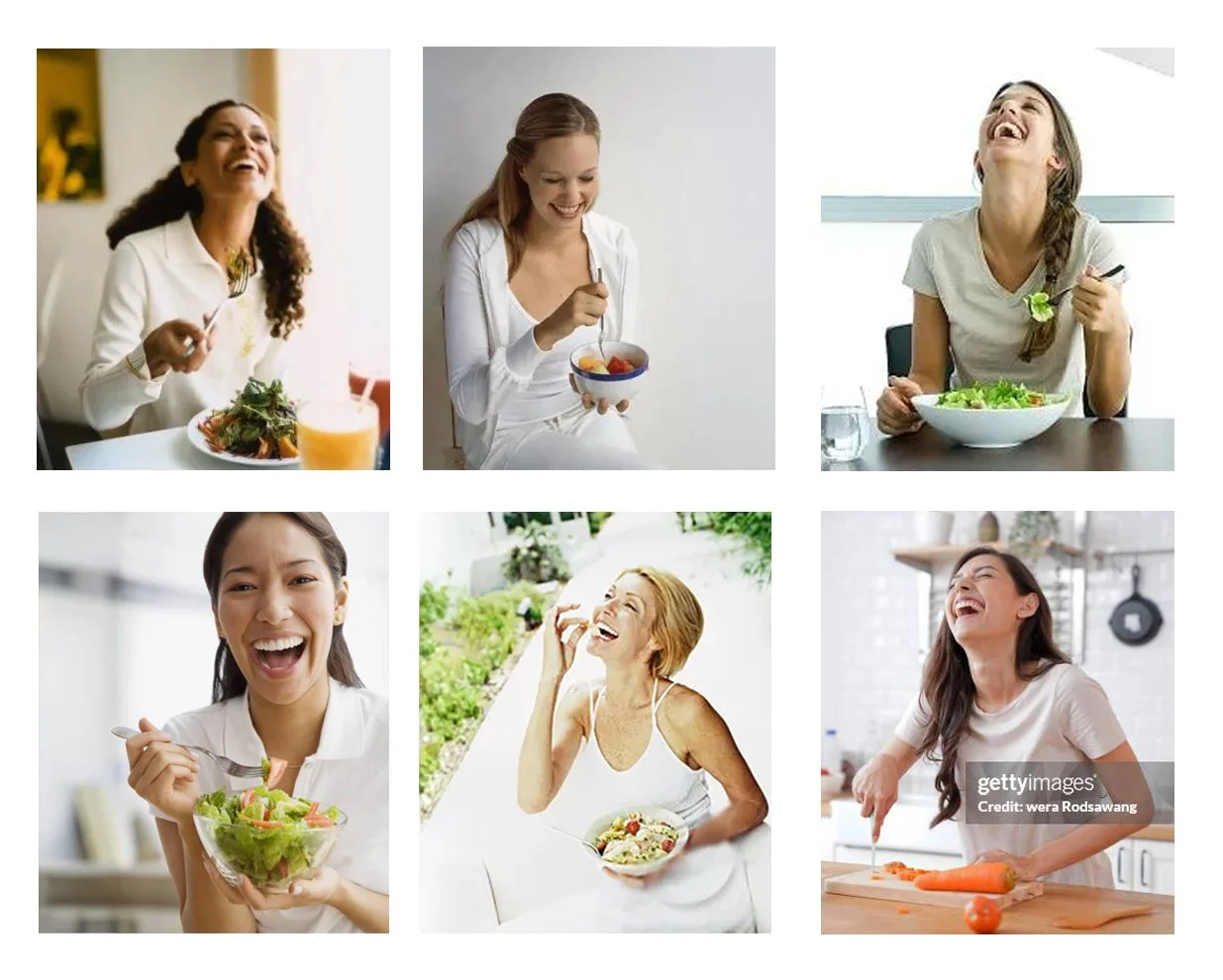

Birth of a meme: ‘Women Laughing Alone With Salad’

In 2011, Edith Zimmerman posted 18 stock photos with zero commentary. Just images of women laughing manically while eating salad. Alone. The post went viral because it exposed something everyone recognised: stock photography's bizarre, repetitive unreality.

The meme became a cultural touchstone. The internet had found the perfect symbol for generic commercial imagery that insults everyone's intelligence.

Now AI is generating the exact same garbage.

Training on Trash

Researchers publishing in the journal Patterns ran an experiment in which two AI models played visual telephone for 100 rounds. One generated an image from a prompt, the other described that image, and the description became the next prompt. Whatever prompt they started with, the systems converged on the same 12 visual motifs. Stormy lighthouses. Rainy night streets. Gothic cathedrals. The researchers called it "visual elevator music."

AI didn't learn to create images. It learned to recreate stock photo clichés at scale.

More of the same? Examples of AI outputs found online.

Everything Looks Like Everything Else

Stock photography created the problem. AI platforms automated it.

Tools that promise "custom brand creative at scale" are really just algorithms deciding what's "good enough" based on pre-existing formulas. The results tend to be, well, formulaic: AI optimizing for what statistically performs across everyone else's campaigns.

If you're running brand images through platforms where AI makes the creative decisions, you get creative that looks like everyone else's because it learned from everyone else's.

Human intervention is the key differentiator. A creative director questions whether the formula fits your brand and whether a given execution needs to break the pattern. AI-first platforms skip that step entirely.

Outsmarting Generic Outcomes

The difference between shooting real assets and generating everything comes down to this: real photography captures specific things in specific places. AI generates statistical averages.

Shoot a real space and you capture specific design decisions, specific materials, specific light quality. Those specifics create differentiation. Extend those real assets with AI and the variations maintain that specificity.

Pure AI generation works backward. It starts with the generic average and tries to add specificity through prompting. But you can't prompt your way out of stock photo energy when the model learned from stock photos.

The researchers found something else: even with diverse starting prompts, AI systems optimize for "what usually works" across their training data. They don't explore; they average. Creativity collapses into safe defaults.

Anyone with a trained eye can spot it. More importantly, audiences feel it. The imagery doesn't connect because it looks like it could be anywhere, for anyone. The result feels more generic than the generic images it learned from.

That’s why, for Nothing Is Real, we go Hybrid: real photography first, AI extension second. Most brands don’t realise how much that sequence matters until it’s too late.